Since August 25, 2023, large online platforms such as Twitter (now X), TikTok, or Instagram have had to comply with the Digital Services Act (DSA): a set of rules approved by the European Union (EU) that aim to protect users and their rights. One of its objectives is to fight against online disinformation and illegal content, which has raised concerns about freedom of expression, censorship, and who will control these measures. It is a complex issue that affects us all in our daily digital lives, so we are here to answer key questions.

What will we talk about?

- What does the DSA have to do with what we post on social media?

- Does the DSA promote censorship on social media content? Will my posts be deleted?

- Can online platforms restrict any post they want?

- What is the DSA’s approach to online disinformation? Is sharing it “banned”? Are any criteria established?

- How will the DSA maintain a fair balance with fundamental rights like freedom of speech?

- Who will make sure that companies comply with the DSA?

- Can a user contribute to the DSA application?

- Does the DSA only apply to the European Union? Can it be extended to more countries?

What does the DSA have to do with what we post on social media?

The Digital Services Act is a set of norms approved by the European Union with the aim of regulating digital services, such as social networks or online stores, to offer greater guarantees to EU users. With this regulation, the European Union introduces a series of obligations that platforms (such as Twitter, Facebook, Instagram, TikTok, LinkedIn, Zalando, Booking, or Amazon) must comply with or they will risk being sanctioned.

Two of the DSA’s main concerns are the dissemination of illegal content and of disinformation through these digital platforms, among other aspects.

Does the DSA promote censorship on social media content? Will my posts be deleted?

Various posts on social networks have claimed that the measures proposed in the DSA “attack freedom of expression” and that the law “censors” citizens' publications. However, the Digital Services Act only establishes measures that cover the detection, identification, and removal (Art. 7) of illegal content, that is, posts that share material such as child sexual abuse content, terrorist content, incitement to hatred, or offers to sell illegal products.

The DSA does not provide a specific definition of “illegal content”, but rather leaves that decision and interpretation to national laws and those of the EU. Additionally, if content is illegal in a specific country, it will be restricted only in that territory. The rest of the posts that do not fall within this category of illegal content will be subject to the norms of each platform.

Can online platforms restrict any post they want?

The DSA's rules on how to deal with posts only apply to content that goes against the law. However, we should keep in mind that behind online platforms there are private companies that establish their own rules (the famous “terms and conditions”) on what can and cannot be shared on their services, and on what mechanisms exist to restrict publications that do not follow those rules.

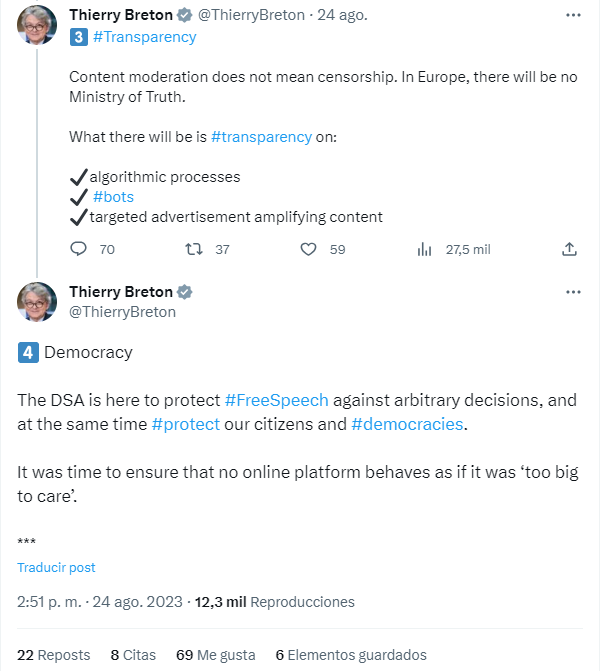

Many social media platforms such as Twitter or Instagram already had these content moderation processes, but until now users did not have any guarantee protecting their freedom of expression against unfounded decisions by private platforms. This is where the DSA comes into play.

The new regulation aims to improve the protection of users’ fundamental rights, especially freedom of expression. It thus demands an increase in transparency and avoidance of unjustified or discriminatory decisions by these private companies, as emphasized by the European Commissioner for the Internal Market Thierry Breton.

This means making the platform's terms and conditions public and accessible (Art. 14), so that users know what rules they are subject to, and giving users the means to learn of and challenge the platforms' decisions about their publications (Art. 17). That is, a social media platform like Facebook (which is a private company after all) will not be able to delete a user's post without notifying them of the decision, the justification for it, and how to appeal. Additionally, certain digital platforms will have to publish reports on the number and type of posts they have moderated.

What is the DSA’s approach to online disinformation? Is sharing it “banned”? Are any criteria established?

Some users have claimed that this law will be a way for institutions to block, as “disinformation”, content that "contradicts the authorities". Disinformation by itself is not prohibited by EU law. But as we have seen, the DSA does impose measures to eliminate or encourage the elimination of 'illegal content'. Therefore, if a publication that spreads disinformation is not illegal, it will not be treated as illegal content, thus respecting freedom of expression. If a post spreads disinformation that contains illegal content, it will be subject to restrictions.

Now, there are certain considerations in the DSA that can help reduce disinformation on certain topics. The text establishes that large platforms must prevent their services from being exploited to disseminate or amplify content with negative effects on:

- The exercise of fundamental rights.

- Democratic processes, civic discourse and electoral processes, as well as public security.

- Gender-based violence, the protection of public health and minors, and a person's physical and mental well-being.

To this end, platforms must analyze their systems, algorithms, and design to carry out a risk assessment (Art. 34) and create a strategy to reduce the risk that their services are exploited to disseminate or amplify content with negative effects (Art. 35). The text specifies that, in doing so, they must focus not only on the risks associated with illegal content, but also on “how their services are used to disseminate or amplify misleading or deceptive content, including disinformation” (Rec. 84). That is, they must try to ensure that their service does not favor harmful but legal content, which can include disinformation if it has an impact on the aforementioned areas. External audits (Art. 37) and codes of conduct (Art. 45) are some of the measures contemplated. Specifically, the current Code of Practice on Disinformation will become a way to demonstrate that these obligations to reduce the spread of harmful disinformation are met.

How will the DSA maintain a fair balance with fundamental rights like freedom of speech?

The protection of freedom of expression is at the core of the regulation, and this fundamental right is named on numerous occasions. For this reason, the obligations on illegal content seek a balance: they try to prevent platforms from removing content excessively on grounds of illegality, without letting them escape their responsibilities.

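Users have the option to challenge decisions made by online platforms to remove their content under the DSA, even when those decisions are based on the platforms' terms and conditions. Users have three options for dispute resolution: the platform's internal complaint-handling system (Art. 20), out-of-court dispute settlement bodies (Art. 21), and the courts.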

In the case of very large online platforms and very large online search engines (VLOPs and VLOSEs), users will be able to better understand the ways in which these platforms impact our societies, and the platforms will be obliged to reduce their negative effects, including threats to fundamental rights such as freedom of expression.

Who will make sure that companies comply with the DSA?

The DSA does not regulate all companies equally, but establishes a range of obligations in four categories, which depend on the services provided by the companies and the number of users they have. Among them, the very large online search engines and platforms (VLOSEs and VLOPs) stand out: these are the services that, due to their number of active users in the EU (more than 45 million a month), can have a greater social impact, like Twitter and Google.

Based on this categorization, the authorities in charge of ensuring that each type of company complies with the law are also different. On the one hand, VLOPs and VLOSEs will be supervised by the European Commission, specifically by a team of experts within the Directorate-General for Communications Networks, Content and Technology (CNECT). This aims to ensure that supervision of the most influential digital platforms is strict and uniform across the EU. Their work will be key to ensuring that the DSA does not remain a dead letter and translates into positive changes for the platforms' users.

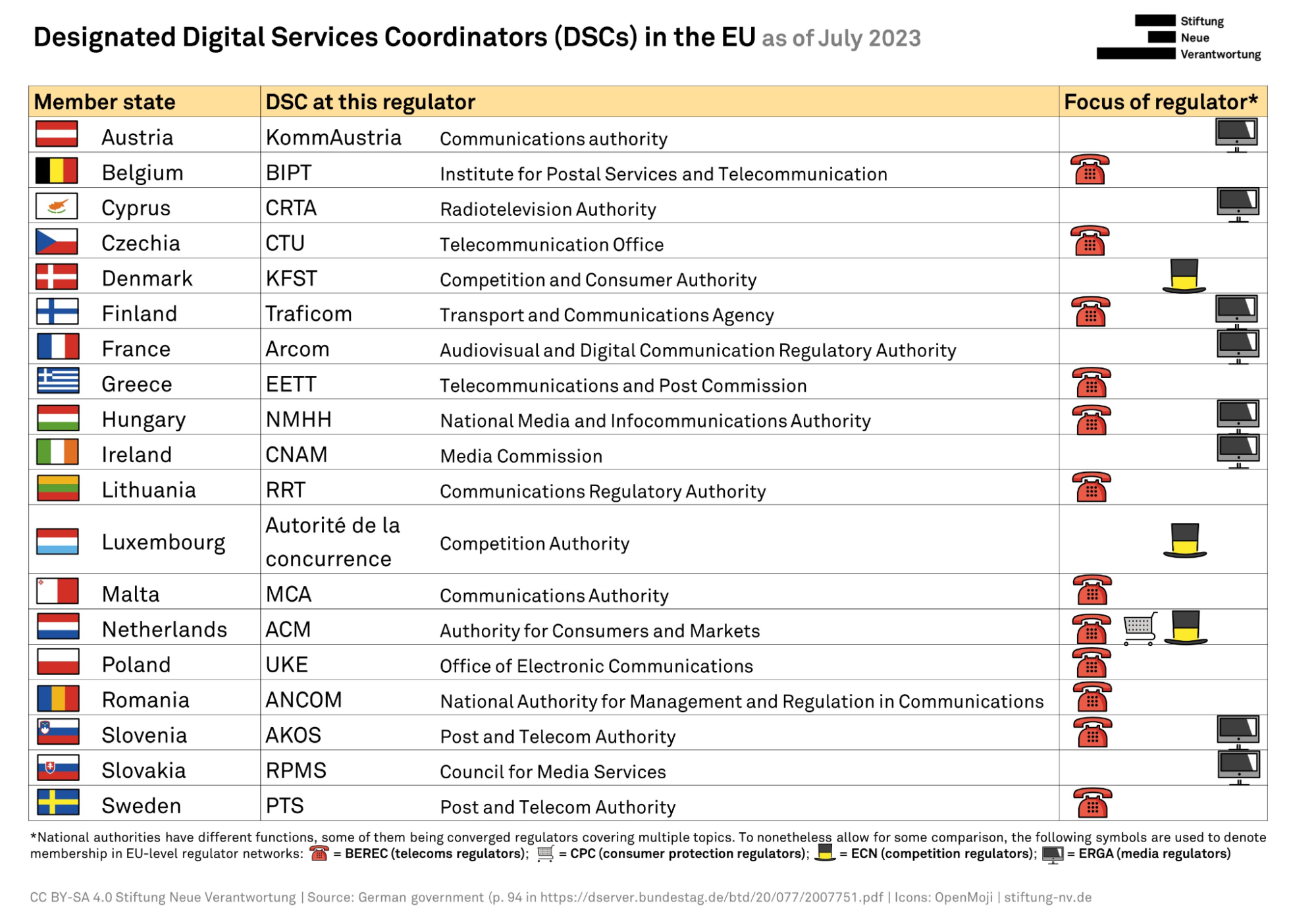

For other platforms, each EU member state will appoint an independent national Digital Services Coordinator that will be responsible for regulatory oversight in the country. This coordinator must be designated before February 17, 2024.

Some states have already announced who their Digital Services Coordinators will be. As the German government has noted in response to a question from a member of parliament, at least 19 countries have made this information public. Among the entities chosen are regulators of telecommunications services or competition matters. For example, Romania has appointed the National Authority for Management and Regulation in Communications (ANCOM, for its acronym in Romanian), Ireland has appointed the Media Commission, and Denmark the Competition and Consumer Authority. A chart made by Stiftung Neue Verantwortung lists the coordinators included in the document.

The representatives of the Digital Services Coordinators of each EU member state will form the European Board for Digital Services, an independent advisory group at EU level that will oversee the correct application of the regulation.

The text also recognizes other figures who can ensure compliance with the regulation, such as trusted flaggers: entities specialized in detecting illegal content. These flaggers will be designated by the digital services coordinator of each State and their function is to notify the platforms when they detect publications that do not comply with the law.

Can a user contribute to the DSA application?

The DSA also seeks the participation of civil society. To do this, platforms will have to establish channels through which any user can report the presence of illegal content. In addition, companies will have to publish reports on the notifications received and the actions taken after receiving these notices.

This is especially important since the DSA does not require platforms to monitor absolutely everything that is published on their services. Platforms are not liable for illegal content of which they are unaware. These notification mechanisms therefore give users a way to put on record that such illegal content exists. Only when a platform has “actual knowledge” that content is illegal does it begin to be responsible for it.

Does the DSA only apply to the European Union? Can it be extended to more countries?

The DSA is a Regulation of the European Union, so it only applies in EU member states. It covers not only the services of technology companies based in European Union countries, but any service that operates in the EU.

However, it is expected that this package of measures will also have effects in other countries through what is known as the Brussels effect, by which EU measures end up being transferred to the rest of the world. This was the case of the EU General Data Protection Regulation, as many platforms decided to extend the changes they implemented to comply with this regulation to other countries in which they operated.

For example, the Wikimedia Foundation (the organization responsible for Wikipedia, one of the large platforms to which the law already applies) has said that the changes it has introduced as a result of the DSA will be implemented in the rest of the world. “The rules and processes governing Wikimedia projects around the world, including changes in response to the DSA, are as universal as possible,” the organization has stated, according to Euronews. Snapchat has also said that it will implement some of its measures globally in the wake of the DSA, according to TechCrunch.

On the other hand, the Brussels effect could also mean that this first effort to regulate large technology companies inspires other countries to create their own similar laws, taking the European regulation as an example.