Since August 25, 2023, online platforms such as Twitter (now X), Instagram, TikTok, Amazon, and Wikipedia, as well as search engines such as Google Search and Bing, have had to comply with the Digital Services Act (DSA): a set of rules and measures approved by the European Union (EU) to regulate the digital space. The text grants rights to users and sets obligations for tech companies according to their size and nature, such as content moderation or algorithmic transparency, as well as sanctions if they fail to comply.

Some platforms are already adjusting their policies to meet their new responsibilities, while others have criticized the classification imposed on them. We analyze what the DSA consists of, what it regulates and how it affects us as users.

What will we talk about?

- What is the DSA? What is its objective?

- When does it enter into force? What are the key dates? Why now?

- What does the DSA aim to regulate? What measures does it propose?

- Which companies are subject to the DSA? How have they positioned themselves?

- What changes are the platforms implementing? How will it affect me in my daily life?

What is the DSA? What is its objective?

The Digital Services Act is a set of rules approved by the European Union to regulate digital services, such as social networks or online stores, and to give EU users stronger safeguards. The text includes a series of obligations for tech companies, such as tools for content moderation and greater transparency about algorithms and personal data processing.

The DSA came into effect alongside the Digital Markets Act (DMA), which is more focused on competition. Both form part of what is known as the digital services package, a change in the European regulatory landscape that seeks to create a common framework for large technology companies.

Before the DSA and the DMA, the only framework was a directive adopted 23 years ago (the Directive on electronic commerce), when the Internet was very different from what it is today, and it set only some general standards. According to the EU, the major changes in the digital world in recent years, as well as the emergence of new threats, have made these new regulations necessary.

This package of measures is also expected to have an effect in other countries through what is known as the Brussels effect, whereby EU regulation ends up being adopted in the rest of the world because of the importance of the European market. Therefore, for practical purposes, this new regulation represents a first global effort to regulate large technology companies.

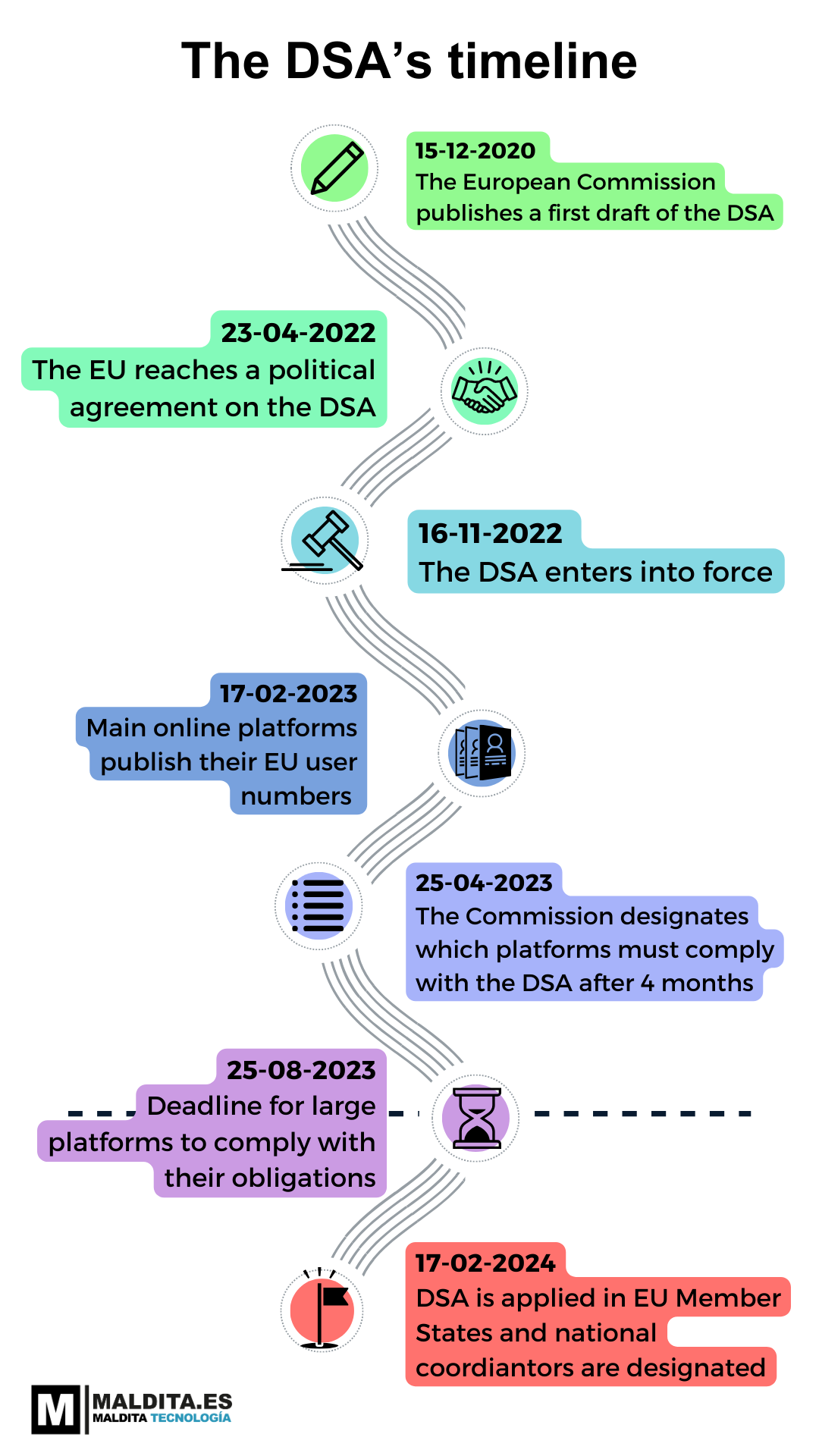

When does it enter into force? What are the key dates? Why now?

The regulation entered into force on November 16, 2022, although it is being applied progressively, in different phases. The DSA establishes that the main digital platforms (those with more than 45 million monthly active users in the EU) have to comply with the new regulation earlier, specifically as of August 25, 2023, while the rest of the companies will have to comply from February 17, 2024.

But the DSA's journey dates back to December 2020, when the European Commission published its first proposal. The text was approved two years later. This illustration shows a timeline of major DSA milestones and key dates, keeping an eye on the future as well.
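The phased application described above can be sketched as a simple lookup. This is a simplified illustration, not a legal test: the function name and constants are hypothetical, the threshold follows the article's wording ("more than 45 million"), and the sketch ignores the fact that very large services are formally designated by the European Commission.

```python
from datetime import date

# Threshold and dates as quoted in the article.
VERY_LARGE_THRESHOLD = 45_000_000        # monthly active users in the EU
VERY_LARGE_DEADLINE = date(2023, 8, 25)  # very large platforms and search engines
GENERAL_DEADLINE = date(2024, 2, 17)     # all other services


def compliance_deadline(monthly_active_users_eu: int) -> date:
    """Return the date from which a service has to comply (simplified)."""
    if monthly_active_users_eu > VERY_LARGE_THRESHOLD:
        return VERY_LARGE_DEADLINE
    return GENERAL_DEADLINE


print(compliance_deadline(120_000_000))  # 2023-08-25
print(compliance_deadline(3_000_000))    # 2024-02-17
```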

What does the DSA aim to regulate? What measures does it propose?

With this act, the European Union intends to guarantee the protection of EU citizens’ online rights and “to create and maintain a level playing field” for digital service companies. To do so, the document introduces a series of obligations that platforms must comply with to avoid being sanctioned, and which cover different topics. Among them are disinformation on social networks, the dissemination of illegal content, transparency measures and responsibilities regarding data collection.

- Disinformation: the DSA reflects the EU’s concern about systemic risks, that is, the possibility that certain services are used in a way that amplifies harmful content. Two of these risks are the dissemination of content that can have negative effects on civic discourse and electoral processes, or on public health and a person's physical and mental well-being. Hoaxes about the safety of vaccines or disinformation about allegedly rigged elections are examples of this type of content.

To combat this danger, the DSA requires large platforms to demonstrate their efforts to evaluate and mitigate this systemic risk, that is, to explain how they try to prevent their services from being used to disseminate or amplify this content. One way to do so is by adopting codes of conduct, among them the European Code of Practice against Disinformation (of which Maldita.es is part, and compliance with which has been voluntary until now). The DSA also establishes specific measures, such as the prohibition of showing targeted ads based on special categories of personal data, such as users' political orientation. Other obligations include applying mechanisms to reduce these systemic risks, undergoing independent audits, and creating crisis protocols.

- Illegal content: “What is illegal offline should be illegal online” is a maxim at the core of the DSA. Thus, it pays special attention to the moderation of illegal content, such as publications that promote hate speech, terrorism, discrimination, or child sexual content. Platforms cannot be obliged to monitor everything they host, and they cannot be held accountable for harmful content they are not aware of. However, the DSA requires large platforms to publish annual reports on their moderation decisions and to establish channels where users can report these types of posts.

- Transparency: this is another of the cornerstones of the law, specifically regarding platforms' recommendation algorithms and decision processes. Not only do companies have to provide their data and algorithms at the request of European authorities, but they must also explain in their terms and conditions how these algorithms work and what options users have to modify them, among various other transparency obligations.

- Data collection: the law requires very large online platforms to offer at least one recommendation system option that is not based on profiling. It also bans targeted advertising based on profiling that uses special categories of personal data, such as sexual orientation, political ideology, or religious beliefs.

If digital services fail to comply with their responsibilities, the DSA establishes sanctions, including fines of up to 6% of the annual worldwide turnover of the company.
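As a rough illustration of the scale of that ceiling, the arithmetic can be sketched as follows. The function name and the turnover figure are hypothetical; only the 6% rate comes from the text, and the result is an upper bound, not a prediction of any actual fine.

```python
MAX_FINE_PERCENT = 6  # "up to 6% of the annual worldwide turnover"


def max_fine(annual_worldwide_turnover: int) -> float:
    """Upper bound of a DSA fine for a given annual worldwide turnover."""
    return annual_worldwide_turnover * MAX_FINE_PERCENT / 100


# Hypothetical company with a turnover of 10 billion (any currency).
turnover = 10_000_000_000
print(f"{max_fine(turnover):,.0f}")  # 600,000,000
```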

Which companies are subject to the DSA? How have they positioned themselves?

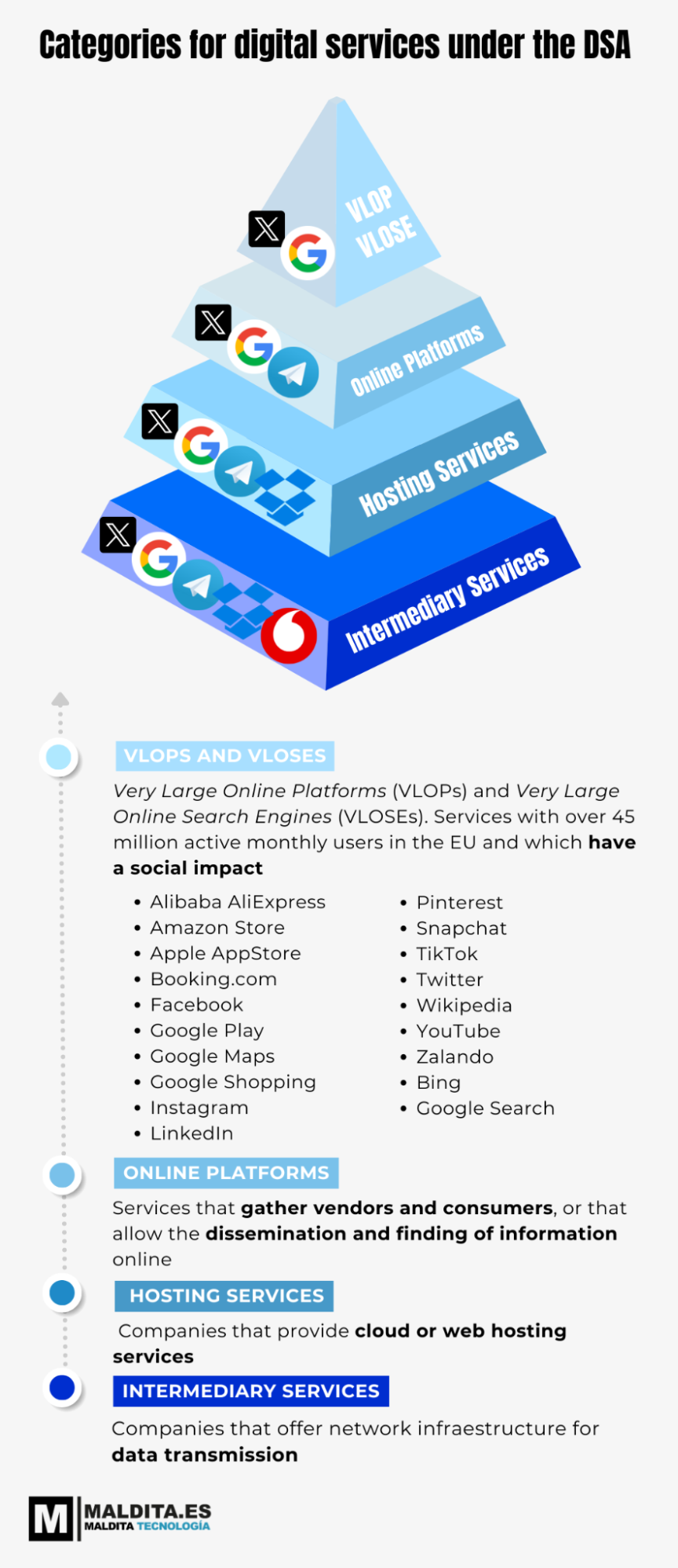

The DSA sets forth “tiered obligations” to match the role, size and impact of digital services in the online ecosystem. It distinguishes four categories:

- Intermediary services: companies offering network infrastructure for data transmission, such as Internet service providers.

- Hosting services: companies offering cloud or web hosting services.

- Online platforms and search engines: services that bring together sellers and consumers, such as online marketplaces, app stores, collaborative economy platforms and social media platforms.

- Very large online platforms (VLOPs) and very large online search engines (VLOSEs): those whose impact can be greater given their number of users in the EU (over 45 million monthly active users), such as Twitter or Google. Currently, 19 services fall under this category.

All digital services (or intermediaries) must comply with basic measures, such as making their terms and conditions public. As we go upwards in the categories, more demanding obligations are added. That is, companies in the highest category (VLOPs and VLOSEs) must comply with the specific measures for that group and with all the accumulated obligations of the three levels below.
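The cumulative logic described above can be sketched as a small lookup. The tier keys and obligation labels below are illustrative placeholders loosely based on examples mentioned in this article, not a legal mapping of the regulation, and the function name is hypothetical.

```python
# Tiers ordered from the broadest category to the most demanding one.
TIERS = ["intermediary", "hosting", "online_platform", "very_large"]

# Illustrative labels only: what each tier adds on top of the previous ones.
OBLIGATIONS_ADDED = {
    "intermediary": ["publish terms and conditions"],
    "hosting": ["offer a channel to report illegal content"],
    "online_platform": ["give information about advertisers"],
    "very_large": [
        "assess and mitigate systemic risks",
        "undergo independent audits",
    ],
}


def obligations_for(tier: str) -> list[str]:
    """Collect the obligations of a tier plus those of every tier below it."""
    level = TIERS.index(tier)
    result = []
    for name in TIERS[: level + 1]:
        result.extend(OBLIGATIONS_ADDED[name])
    return result


print(obligations_for("hosting"))
print(len(obligations_for("very_large")))  # 5
```

Modeling each tier as "what it adds" rather than as a full list keeps the accumulation explicit: a service in the top category inherits everything from the three levels below.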

The European Commission is in charge of designating which services fall into each category, based on the monthly users they report. Although many platforms are already adapting to the regulation, companies such as Amazon and Zalando have criticized the category assigned to them.

What changes are the platforms implementing? How will it affect me in my daily life?

Since August 25, VLOPs and VLOSEs have had to comply with the DSA in EU territory or face sanctions. Some platforms have already announced changes they have made in order to comply with the EU legislation:

- If you are a TikTok user, you can now deactivate its recommendation algorithm. If you do, your “For You” and “Live” pages will show the most relevant content for the region you are in, and you will be able to see videos in chronological order. You can also refresh your “For You” feed so that the recommendation system acts as if you had just signed up for the app. In other words, if you are not happy with the videos that TikTok is showing you, you can reset the algorithm. TikTok has also added a channel to report illegal content, and users can access more information about its moderation policy, its recommendation system and advertising on the platform, among other things. Users under 17 years of age will not be shown personalized ads. Here you can check more details.

- In the case of Twitter, its transparency reports will show which posts have violated the platform's rules, what type of content has been removed and how many accounts have been suspended. The platform has resumed these reports in view of “changes in the regulatory landscape”, according to Twitter's communications. Twitter has also added new features, such as a tool to report illegal content in the EU, a channel to appeal if your content has been flagged as illegal, and information on dispute resolution if you believe that some of your content has been improperly restricted.

- If you use Facebook or Instagram, Meta has announced that you can now check how its recommendation algorithms work and choose to deactivate them. If you choose this option, content published by the profiles you follow will appear in chronological order, and search results will be based on the keywords you enter. You’ll also have access to new tools for reporting content, and Meta will provide more information if it restricts any of your posts.

- When you buy on Amazon, you’ll have more information about sellers, as well as channels to report fraudulent products, according to The Wall Street Journal.

- If you use Google’s services like Google Search or YouTube, you’ll be able to see more information on advertisers in the Ads Transparency Center, as defined in the regulation. You will also understand more about Google’s policies and its moderation of illegal content. You can learn more about this here.

- On Snapchat, you can now deactivate the recommendation algorithm and check why certain videos are shown to you. The app has also added notifications and channels for appealing content moderation decisions, and will stop showing personalized ads to users under 18. You can learn more here.

There are also basic requirements that affect the rest of the services we use. For instance, every online platform will have to provide more information about advertisers and implement measures to prevent fraudulent ads. Moreover, if we have been sold a fraudulent product, the platform will have to warn us once it becomes aware of it. Other measures aim to end bad practices such as fake reviews on platforms like Amazon, and hold platforms responsible for fraud if they play an active role in the resale of online tickets.

The law also aims to put a stop to dark patterns: strategies based on the user experience that aim to trick users into doing something they don't really want to do (the typical case: you go to book a hotel room and the page warns you that only one is left and that other people are viewing the same offer). According to the law, we will see fewer messages that rush us to buy.